The article in Nature (23Mar06 issue),

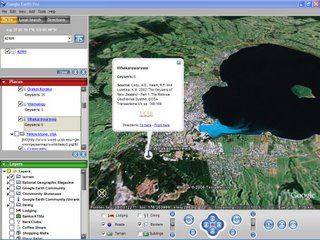

2020 Computing: Everything, Everywhere by Declan Butler, discusses the proliferation of sensor networks and the expected, building data flood. An important issue only briefly mentioned in the otherwise excellent article is the need to handle vast amounts of data and produce meaningful products. Infinite data are often similar to no data at all. In fact, so much data already are created and collected, that many are effectively discarded. In a talk at the University of California San Diego, Google Earth's Chief Technical Officer Michael Jones described conversations with NASA on their vast planetary imagery library; most is not used or visualized, but simply stored. The challenge is to not simply create, but to organize and archive data in a meaningful way. The solution--possibly invisible to the human user--requires a metadata infrastructure where specific queries by both humans and computers can parse input.

Elsewhere in the same Nature issue, in a commentary entitled "2020 Computing: Exceeding Human Limits," Stephen Muggleton outlines some of the issues associated with automation and vast amounts of data.

At UCSB, we have several scientists interested in "Digital Earth."

Digital Earth was defined by Gore in 1999 as a "multi-resolution, three-dimensional representation of the planet, into which we can embed vast quantities of geo-referenced data." Digital Earth could also be defined by Butler's article title, "Everything, Everywhere." Most agree that despite progress in data creation and virtual-globe technology, Digital Earth does not yet exist. Until recently, I would have said that mechanisms for adequate visualization were missing; Google Earth, ArcGIS Explorer, and other virtual globes have largely alleviated that issue. Now, perhaps the most significant challenge is looming. In my view, Digital Earth has not come to fruition because we are missing the set of algorithms needed to associate uncontextualized data and concepts with space and time. Consider the volumes within a library. A vast amount of unusable data exists on the bookshelves, and would remain unusable even if the volumes were digitized. The problem is how to associate the various internal references with one another and with concepts, and then how to create from these results an appropriate representation within space-time. Similar issues are the subject of at least two ongoing UCSB geography dissertations.

Finding solutions for metadata, context algorithms, data mining, and spatiotemporal representation is not the exclusive purview of Google, Microsoft, and the private sector. Nor have these problems gone unrecognized by other scientists; for instance, the recent report, Priorities for GEOINT Research at the National Geospatial-Intelligence Agency, discusses several of these questions, including data proliferation, mining, and visualization, all of which hold particular relevance to spatial scientists. Such data issues are general, and are faced by every sector of a computing-based, science-driven society. Geographers should equip themselves for the challenge.